The Productivity Paradox

Why Buying 'Smarter Tools' Often Fails

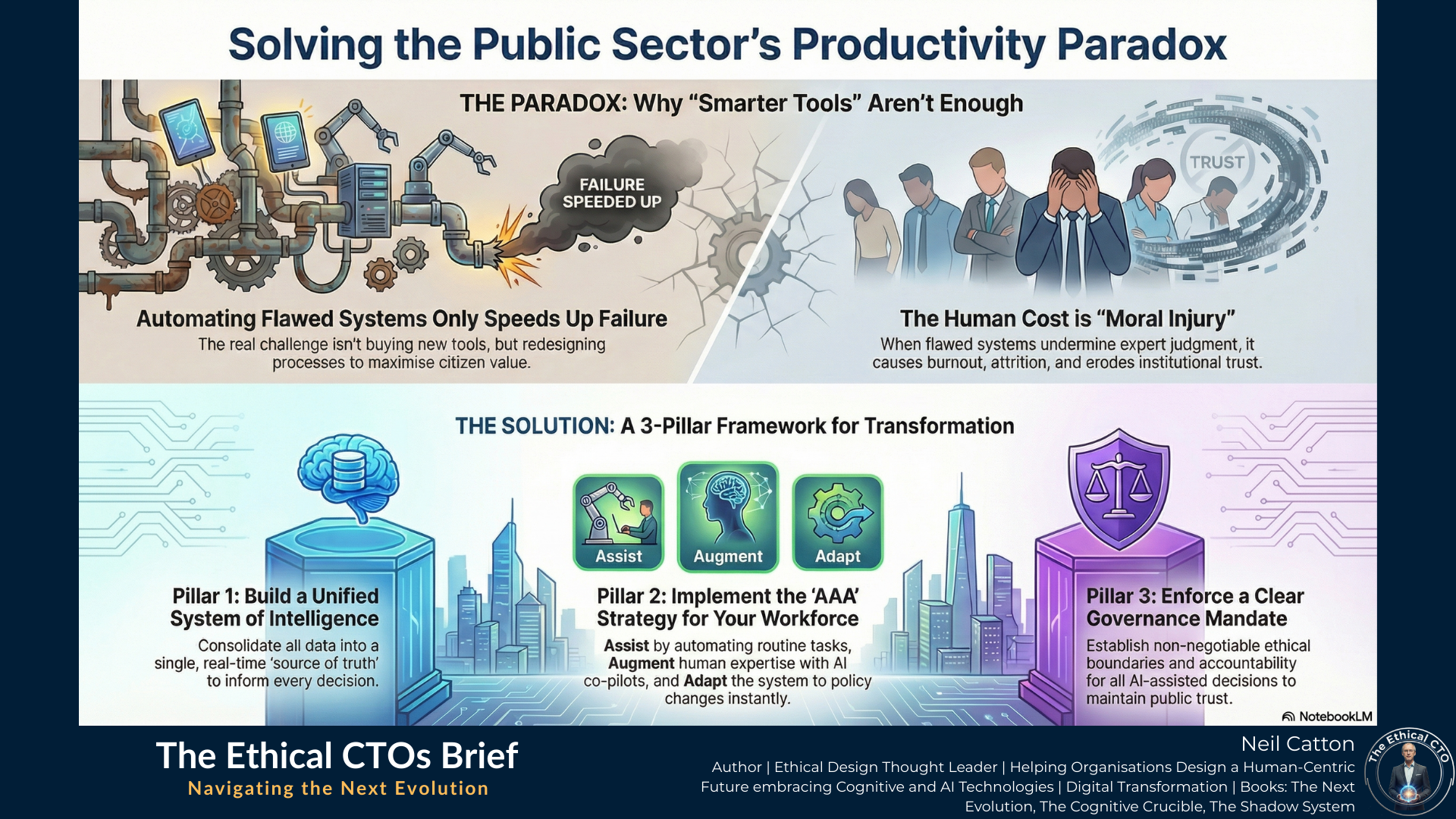

In this briefing, I explore why the acquisition of "Smarter Productivity Tools" often fails to deliver real value. I introduce the Productivity Paradox - where automating flawed processes simply digitises friction - and offer a structural solution: the AAA Strategy (Assist, Augment, Adapt). By shifting from disjointed tool stacks to a unified System of Intelligence, leaders can maximise citizen impact while preventing the Moral Injury of a front-line workforce constrained by unintelligent systems.

The Paradox of Digital Effort

The modern public sector faces a crippling squeeze. Rising citizen expectations and increasingly complex societal challenges clash with stringent budget constraints and often outdated operational frameworks. Consequently, the prevailing narrative is one of technological salvation. This advocates for the rapid deployment of “Smarter Productivity Tools” (SPT), a broad category encompassing everything from generative AI and intelligent automation to advanced data analytics and modern collaboration platforms.

However, this strategy, without fundamental systemic change, creates a significant and costly paradox. Automating inherently inefficient or flawed processes doesn’t lead to true transformation; it simply speeds up our failures. The goal isn’t to do the wrong things faster. The real challenge isn’t acquiring tools but achieving the structural transformation needed to maximise Citizen Value and Caseworker Impact. Current implementations focus on a superficial “faster-horse” solution, aiming only for metrics like reduced admin time or quicker responses. The Next Evolution requires a strategic re-imagining of the entire operating model, with technology serving as the structural framework for an intelligent, responsive and ethical public service.

This analysis outlines the three essential requirements for unlocking genuine productivity: a unified intelligence architecture, a structured framework for human augmentation and a robust mandate for ethical AI delegation and governance.

Moving Beyond Disjointed Tool Stacks

To move from simple transactional efficiency to significant transformation, public sector organisations must abandon the idea of a fragmented application stack and commit to creating a unified, adaptive System of Intelligence. This system isn’t a separate application for staff; it should be an integrated, learning partner embedded seamlessly into daily workflows, one that continuously refines itself based on operational outcomes.

The success of a Smarter Productivity Tool (SPT) hinges less on its individual features and more on its ability to harness and utilise critical real-time context from a centralised ecosystem. Three essential pillars underpin this architecture:

- Unified Data Fabric: This concept extends beyond simple data warehousing. A true Data Fabric serves as a conceptual layer that consolidates various data sources, such as citizen records, historical case files, policy documents and operational metrics, into a single trustworthy source. This ensures every AI tool, caseworker and departmental decision draws from the same real-time source of truth, eliminating the friction caused by data silos.

- Intelligent Connection and Real-Time Data (IoT): Crucially, the System of Intelligence must extend its reach beyond internal records to include Intelligent Connection and the Internet of Things (IoT). By integrating data from smart city infrastructure, connected health monitors and environmental sensors, the system can collect real-time ambient information. This capability enables an “In The Moment” strategy, shifting the service’s approach from reactive problem-solving to adaptive, predictive and immediate intervention, so that resources are deployed precisely where and when they are needed.

- Adaptive Learning Loops: This is the system’s cognitive engine. SPTs must not only automate tasks but also learn from human input. This involves actively capturing and analysing human override decisions – instances where a caseworker rejects or modifies an AI suggestion. By feeding these valuable human judgements back into the system, the underlying AI models are continually refined, driving an upward spiral of competence and accuracy.
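The override-capture step in the Adaptive Learning Loop can be sketched in a few lines. This is a minimal illustration only, not a reference design: the `Decision` record, its field names and the sample case labels are all hypothetical, and a real system would feed the collected examples into whatever retraining pipeline the organisation already runs.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Decision:
    """One AI suggestion and the caseworker's final decision (hypothetical schema)."""
    case_id: str
    ai_suggestion: str
    human_decision: str
    reason: Optional[str] = None  # free-text reason given by the caseworker, if any

    @property
    def is_override(self) -> bool:
        # An override is any case where the human did not accept the AI suggestion.
        return self.ai_suggestion != self.human_decision


def override_rate(decisions):
    """Share of decisions in which the caseworker overrode the AI."""
    if not decisions:
        return 0.0
    return sum(d.is_override for d in decisions) / len(decisions)


def retraining_examples(decisions):
    """Overridden cases, labelled with the human decision, for later model refinement."""
    return [(d.case_id, d.human_decision) for d in decisions if d.is_override]


def override_patterns(decisions):
    """Count which (AI suggestion, human decision) corrections occur most often."""
    return Counter(
        (d.ai_suggestion, d.human_decision) for d in decisions if d.is_override
    )


# Illustrative sample data only.
log = [
    Decision("C-001", "routine", "urgent", "vulnerable tenant"),
    Decision("C-002", "routine", "routine"),
    Decision("C-003", "reject", "approve", "policy exception applies"),
    Decision("C-004", "routine", "urgent", "safeguarding flag"),
]

print(override_rate(log))
print(retraining_examples(log))
print(override_patterns(log).most_common(1))
```

Tracking the most frequent correction pattern, rather than only the overall override rate, is one way to show where the model is systematically under-calling risk.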

Operationalising Intelligence: The New Tools

Within this architecture, the function of Smarter Tools changes:

- Intelligent Collaboration: Secure, integrated platforms automatically apply the correct compliance and auditing trails to documents and communications. This makes governance an inherent feature rather than an administrative burden.

- Cognitive Automation (CA): This represents a leap beyond basic Robotic Process Automation (RPA). CA employs Machine Learning and Natural Language Processing (NLP) to interpret unstructured data – the vast and often chaotic input of government work, such as complex emails, citizen complaint letters and verbal case notes. This allows CA tools to flag contextual risks and automatically classify the urgency of service requests.

- Predictive Insight Tools: These systems utilise unified data to create real-time models predicting future demand for specific and often crucial services like social care placements and housing needs. This dynamic approach automatically adjusts resource allocations, moving away from traditional static budgeting models.

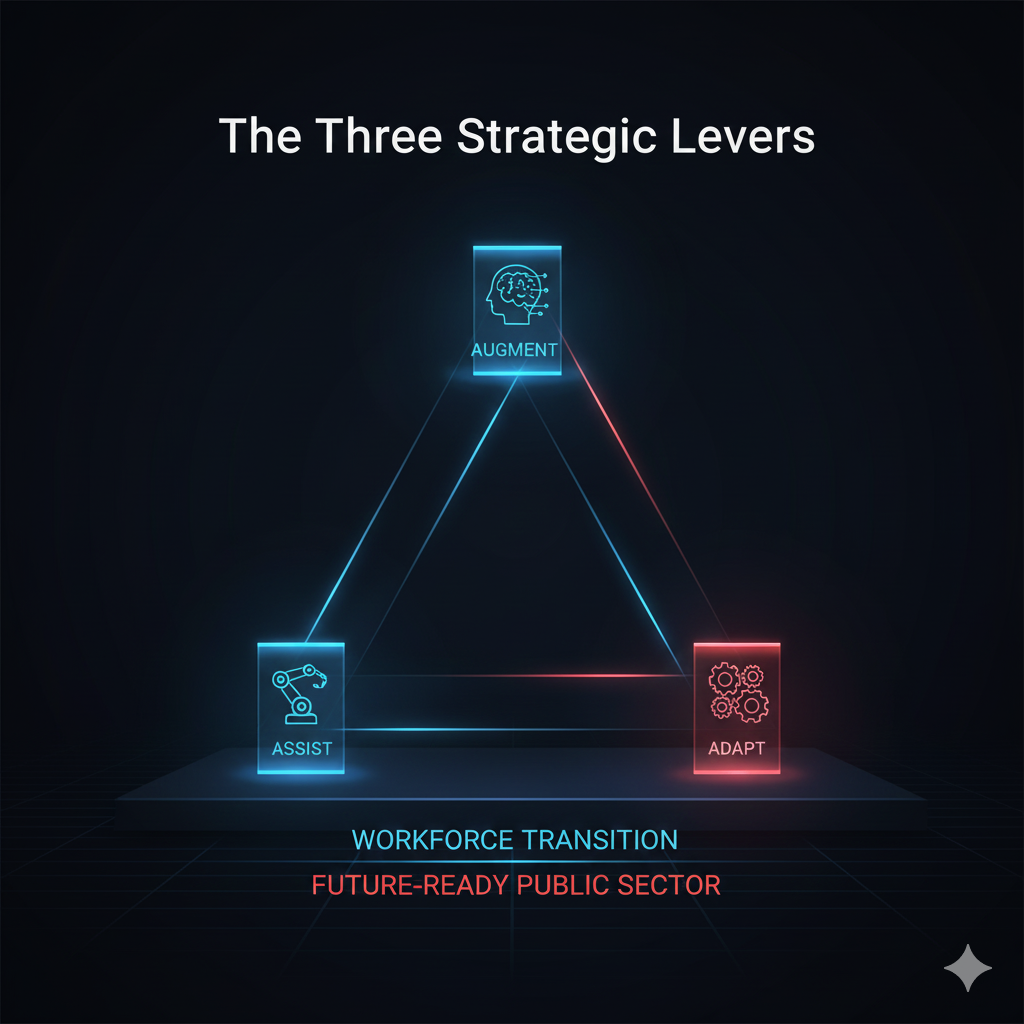

The Three Strategic Levers

Building on this architectural foundation, a successful transition of the public sector workforce demands a clear, formal implementation framework. Without it, tools are simply adopted based on internal preferences rather than strategic value. This is where I bring in the AAA Strategy: Assist, Augment and Adapt.

Assist (The Zero-Touch Workflow)

The first lever focuses on eliminating as much manual work as possible through Intelligent Automation (IA). The strategic goal is to design workflows so robustly that employees don’t need to touch tasks at all – the Zero-Touch principle.

- Focus: Routine, highly structured, rule-based or high-volume tasks. This lever covers the full spectrum of Intelligent Automation, including Business Process Automation (BPA), Robotic Process Automation (RPA) and Cognitive Automation. These technologies are designed to manage complex multi-system processes with minimal human intervention.

- Strategic Outcome: Reclaiming human capacity. Automating administrative tasks frees up thousands of hours of caseworker time for more complex, relationship-based and nuanced work. For example, Generative AI can automatically draft 80% of a response to a Freedom of Information (FOI) request, leaving only the final, sensitive 20% needing review and approval by a human subject matter expert. This fundamentally shifts the employee’s role from originator to editor and approver.

Augment (The Intelligent Co-Pilot)

This is the most advanced and demanding application of SPT, prioritising human enhancement over replacement. Augmentation transforms raw data into highly contextual, actionable intelligence delivered through an Intelligent Co-Pilot.

- Focus: Human decision-making, managing case complexity, and high-level strategic planning.

- Strategic Outcome: Transforming a general caseworker into a specialised strategic expert. The AI analyses thousands of historical cases, cross-references policy changes and models potential scenarios. Crucially, this drives Moral Foresight – the ability of the human worker to anticipate the long-term consequences of their decisions – which supports ethical and sustainable service outcomes. This partnership allows the human to focus on empathy, citizen communication and ethical judgement, guided by data-driven insights.

Adapt (Adaptive Competency)

The third lever fosters organisational resilience and institutional memory. Rather than relying solely on periodic training, the system itself becomes the primary, living method for maintaining competency in a constantly changing legislative landscape.

- Focus: Rapid policy changes, knowledge transfer across teams, and maintaining critical skills in response to external shifts.

- Strategic Outcome: Systemic resilience and instant compliance are key. When a new policy or government regulation is introduced, the underlying AI models – the System of Intelligence – instantly adjust their guidance, case suggestions, and automation routines. This significantly reduces the need for cumbersome, expensive, and often ineffective mass training cycles. It ensures true business continuity during disruptions and guarantees all employees are working to the latest standard immediately.

The Human Cost: Moral Injury and the Erosion of Trust

While efficiency is important, it should never come at the expense of the psychological well-being of frontline workers. Failing to implement the AAA framework strategically, especially the Augment principle, carries a significant human cost. Deploying SPTs without addressing underlying process issues often increases complexity and forces employees into rigid, unintelligent systems.

The core threat here is moral injury. Caseworkers enter public service driven by a desire to help citizens, exercise professional judgement and apply empathy. However, when their judgement is systematically undermined by poorly designed systems – where the AI provides incorrect suggestions but the workflow forces them to proceed – they experience deep psychological conflict. This leads to burnout, attrition and an erosion of professional trust in the institution. Consequently, true productivity is impossible if the organisation alienates those responsible for complex decisions. Strategic deployment of SPTs must explicitly aim to reduce moral injury by empowering rather than constraining human experts.

This human and institutional risk highlights the most crucial strategic requirement: governance. Technology adoption alone isn’t enough. When Smarter Productivity Tools enhance sensitive decisions, we face the complex issue of AI delegation. Public trust, the cornerstone of public service, is fragile. Leaders must grapple with the question: who holds accountability when the Intelligent Co-Pilot’s predictive model leads to a poor or inequitable outcome for a citizen?

The Governance Mandate: Navigating AI Delegation and Ethics

To uphold trust and deliver ethical services, public sector leaders must implement a clear and comprehensive Governance Mandate. This mandate should be centred around five key pillars.

- Define the Ethical Perimeter: Clearly defined, non-negotiable boundaries for decision autonomy are essential. Leaders must formally establish when decisions must remain entirely human-led, such as final sanctioning, welfare withdrawal and high-risk child protection. Conversely, they should also define when AI can reliably assist. This crucial step reinforces digital ethics principles, ensuring AI serves as a supportive tool rather than a replacement for accountability.

- Mandate Auditability and Transparency: Every action, data input and recommendation from an SPT, particularly those in the Augment category, must have a clear, unalterable audit trail. This transparency is essential for compliance and accountability, and for enabling citizens or caseworkers to understand and challenge the basis of a decision.

- The Humanity Challenge (Digital Humans): Interactive AI avatars, or Digital Humans, provide the appealing convenience of 24/7 high-volume interaction. However, governance must ensure efficiency doesn’t compromise essential human empathy. When interacting with an intelligent avatar, the service should clearly signpost an immediate pathway to a human operative. This maintains a focus on compassion and respects citizens’ right to human connection, especially for personal or sensitive matters.

- Equitable Access and Choice: Technology should never be imposed on citizens. Public services are inherently for everyone, regardless of digital literacy or access. Therefore, the Governance Mandate must explicitly guarantee robust, non-digital alternative access routes. This prevents systemic digital exclusion, reduces negative citizen sentiment and respects the right to choose how to access essential services – whether digitally, over the phone or in person.

- Cyber Resilience in the Workflow: When intelligent tools connect to sensitive citizen data, they create new interconnected entry points for risk. As a core element of systems thinking, organisations must adopt a robust cyber resilience model. This goes beyond traditional perimeter defence, which simply aims to keep bad actors out, and acknowledges that system compromise is inevitable. The focus shifts to rapid, intelligent detection and recovery, so that essential public services can continue operating and recover swiftly from any breach.
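To make the Auditability and Transparency pillar more concrete, one common way to approximate an "unalterable" trail is a hash chain, in which every entry commits to the one before it. The sketch below is illustrative only and uses Python's standard `hashlib` and `json` modules; the function names and payload fields are assumptions, and it makes tampering detectable rather than impossible, so a production system would still need protected storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, payload):
    """Hash the previous entry's hash together with a canonical form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(trail, payload):
    """Append a recommendation or action to the trail, chained to the previous entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, payload),
    }
    trail.append(entry)
    return entry


def verify_trail(trail):
    """Return True only if no entry has been altered, removed or reordered."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical example: an AI recommendation followed by the human decision.
trail = []
append_entry(trail, {"case": "C-001", "actor": "co-pilot", "action": "recommend: urgent"})
append_entry(trail, {"case": "C-001", "actor": "caseworker", "action": "approve"})
print(verify_trail(trail))
```

Editing any stored payload after the fact changes its hash and breaks the chain, which is what gives a citizen or caseworker a verifiable basis for challenging a decision.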

A Final Word

Smarter productivity tools are far more than just a simple IT budget item; they’re the strategic lever needed for public service transformation in the 21st century. The ongoing failure of many digital initiatives isn’t due to flawed software but rather the institutional reluctance to rethink the core role of the human employee.

To overcome the productivity paradox, public sector institutions must redefine their most valuable asset. The future public sector employee isn’t a data entry specialist, administrator or process follower, but a System Navigator. This empathetic, high-judgement expert’s primary professional value lies in exercising nuanced discretion, managing ethical boundaries and fostering human relationships with citizens. They’ll delegate the mechanical, predictable and analytical tasks entirely to machines.

By anchoring their transformation in the System of Intelligence, adopting the actionable AAA Framework and enforcing a governance model based on ethical delegation and equitable access, public sector institutions can finally overcome the friction and stagnation of the past. This path unlocks the genuine capacity needed to meet rising citizen demands without compromising the quality, security or ethical compass that defines public service. This systemic shift – understanding the structural implications of AI and preparing organisations for The Next Evolution – is the ultimate challenge for every strategic leader today.

Key Takeaways: Breaking the Productivity Paradox

The Force Multiplier Trap: Automation isn't a cure; layering AI over flawed processes just helps you do the wrong things faster.

The AAA Strategy: A formal framework for tech adoption: Assist (Zero-Touch), Augment (Co-Pilots), and Adapt (Systemic Memory).

Moral Injury: Poorly designed AI systems that override human judgement cause deep psychological conflict and caseworker attrition.

System of Intelligence: Moving from disjointed tool stacks to a unified, adaptive data fabric that learns from human input.

Strategic Insights: Operationalising Intelligence

Zero Touch Principle: Strategic automation of routine tasks to reclaim thousands of caseworker hours for high-value human work.

Human Augmentation: Using AI to drive Moral Foresight, allowing experts to anticipate long-term consequences of complex decisions.

Adaptive Competency: The System itself becomes the method for maintaining compliance, eliminating expensive and slow training cycles.

The System Navigator: The future role of the employee, moving from data entry to high-judgement ethical oversight.

Video Summary: The Ethics of Delegation

The Ethical Perimeter: Defining non-negotiable boundaries where decisions (like welfare withdrawal) must remain 100% human-led.

Auditability & Transparency: Ensuring every AI recommendation has an unalterable trail for accountability and citizen challenge.

The Humanity Challenge: Why digital humans and avatars must always provide a fast-track to real human connection.

Cyber Resilience: Shifting from "perimeter defence" to "intelligent recovery" in the era of interconnected AI data fabrics.

Solving the paradox allows us to shift from mere efficiency to the ultimate goal: the Time-Zero Organisation.

The Ethical CTO: Arc 1 Index

- Transformation: Digital Transformation

- Diagnosis: The Legacy Trap

- Efficiency: The Productivity Paradox

- Velocity: The Time-Zero Organisation

- Governance: Strategy of Designed Chaos

- Orchestration: Executive Coherence

- Impact: The Digital Catalyst