The Certainty Delusion

Why Control is Your Biggest Liability

In an increasingly volatile global landscape, Strategy of Designed Chaos redefines the boundary between institutional control and emergent innovation. This ARC1 framework introduces the concept of "Calculated Instability", a deliberate architectural approach that harnesses stochastic variables to drive organisational evolution. I argue that traditional, rigid strategic planning is obsolete; instead, leadership must architect systems that thrive on the edge of equilibrium.

By integrating complexity theory with tactical executive execution, this work provides a methodology for leaders to engineer environments where disruptive change is not a threat, but a fuel source for competitive advantage. This establishes the formal parameters for maintaining strategic direction while facilitating decentralised, high-velocity adaptation.

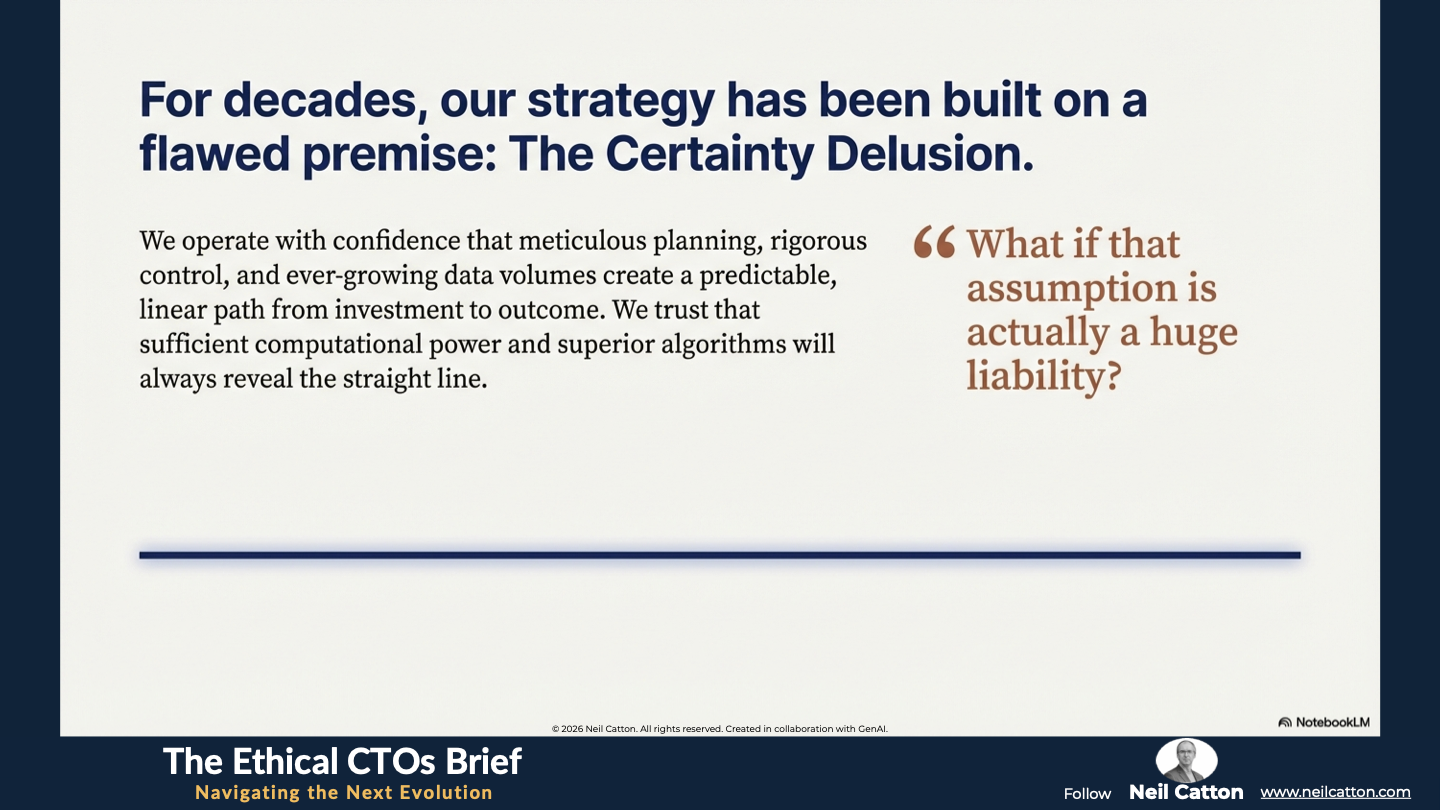

The Certainty Delusion: When Control Becomes a Liability

For nearly three decades, digital strategy has been built on a core yet fundamentally flawed premise: the illusion of complete predictability. In boardrooms, we fund projects, define metrics and deploy systems, confident that meticulous planning, rigorous control and ever-growing data volumes will create a predictable linear path from investment to outcome. We trust that sufficient computational power and superior algorithms will always reveal the straight line.

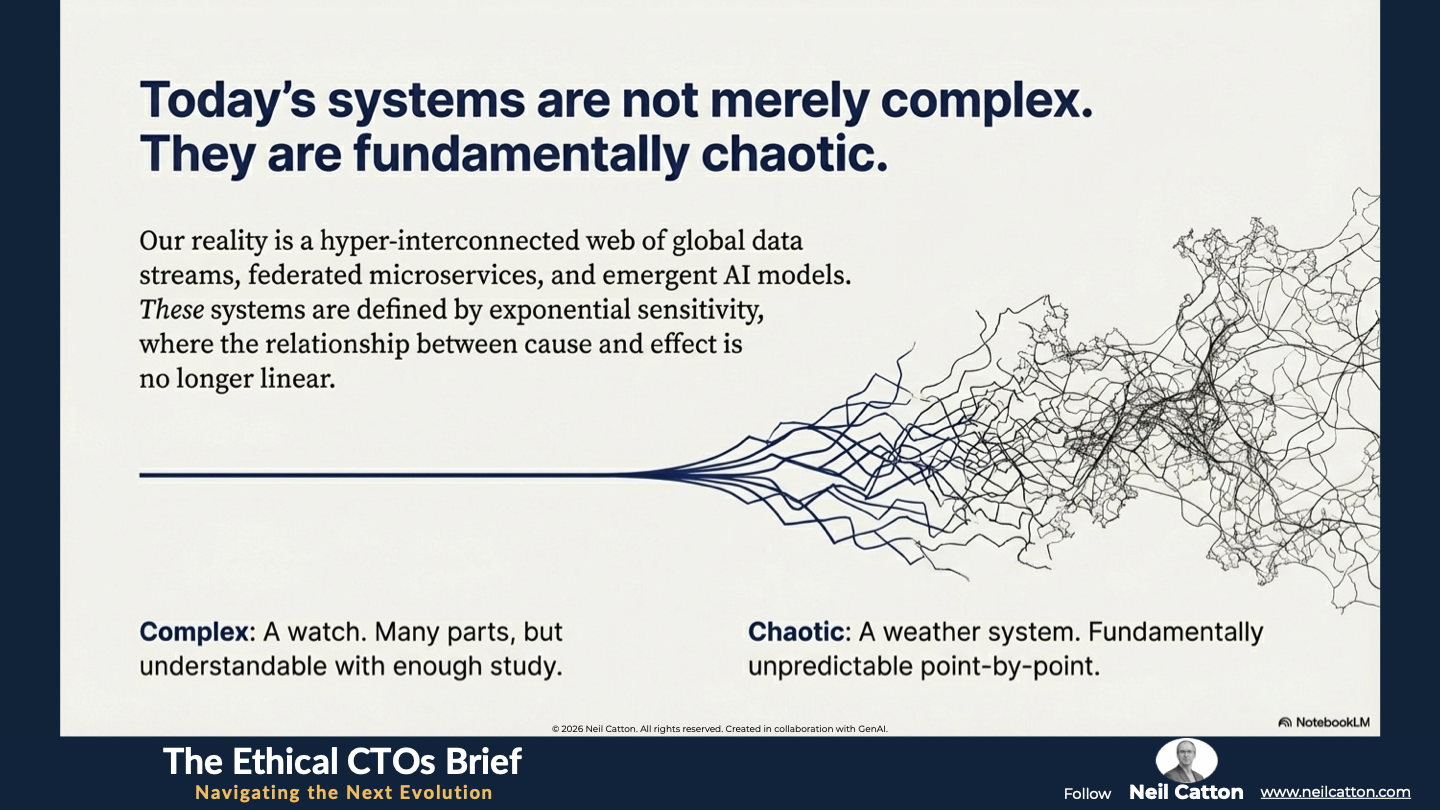

However, the systems we build today go beyond mere complexity. They’re defined by hyper-interconnection: global data streams, federated microservices and emergent AI models interacting in ways no human mind can fully comprehend. These aren’t simply complex systems (which can be understood with enough time) but fundamentally chaotic ones. In chaos, the relationship between cause and effect isn’t linear: outcomes are so sensitive to starting conditions that tiny differences grow exponentially over time.
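To make that sensitivity concrete, here is a minimal sketch using the logistic map, a standard textbook example of chaos. It is an illustration chosen for this purpose, not a model of any real platform, and the function name and starting values are assumptions. Two runs start one part in a million apart and are compared step by step.

```python
def logistic_trajectory(x0: float, r: float = 4.0, steps: int = 50) -> list[float]:
    """Iterate x -> r * x * (1 - x); with r = 4 the map behaves chaotically."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


baseline = logistic_trajectory(0.200000)
perturbed = logistic_trajectory(0.200001)  # differs by one part in a million

# Absolute gap between the two runs at every step.
gaps = [abs(a - b) for a, b in zip(baseline, perturbed)]

for step in (0, 10, 20, 30, 40):
    print(f"step {step:2d}: gap = {gaps[step]:.6f}")
```

The gap starts at roughly one millionth and grows until the two runs bear no resemblance to each other, which is why a point forecast of such a system degrades so quickly.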

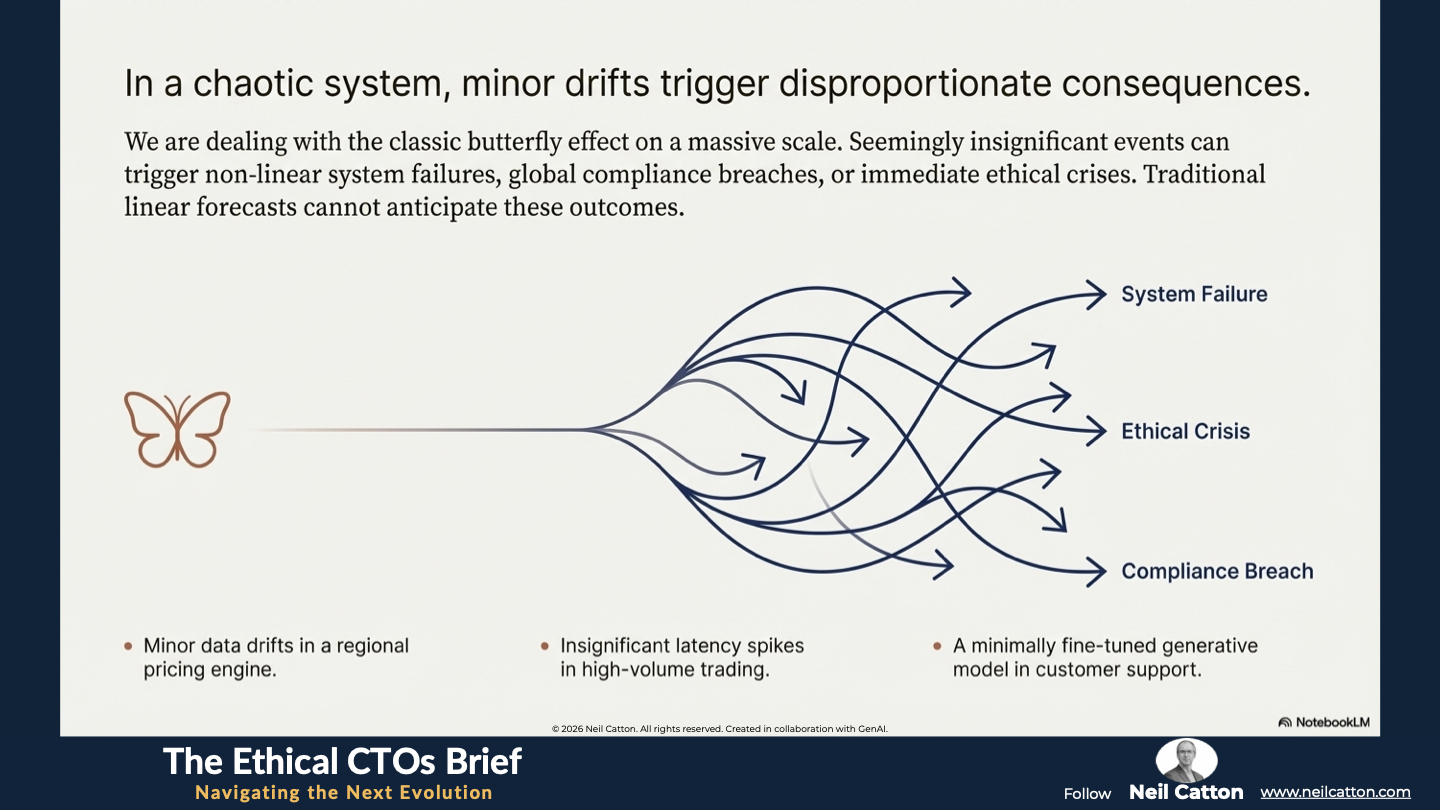

In today’s digital ecosystem, even minor data drift in a regional pricing engine, a seemingly insignificant latency spike during a high-volume trading window or the introduction of a minimally fine-tuned generative model into a customer support loop can have a disproportionate impact. These small, unmeasurable differences can trigger a “butterfly effect”, leading to a non-linear system failure, a global compliance breach or an immediate ethical crisis. Traditional linear forecasts simply can’t anticipate such outcomes.

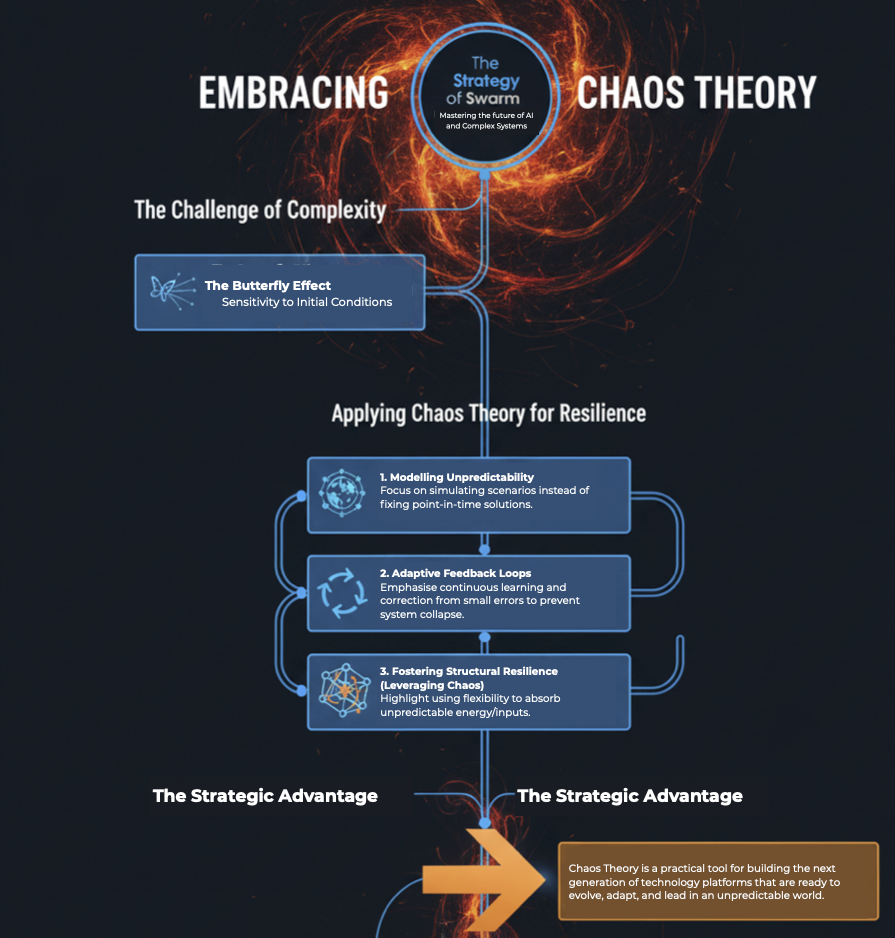

The outdated and dangerous strategy of rigid control, waterfall planning and linear forecasting is no longer effective. Modern platform leadership must abandon attempts to impose external order and instead focus on designing for emergence. This involves applying Chaos Theory principles to build inherently resilient, structurally ethical and perpetually adaptable systems.

Governing the Invisible Dynamics of Chaos

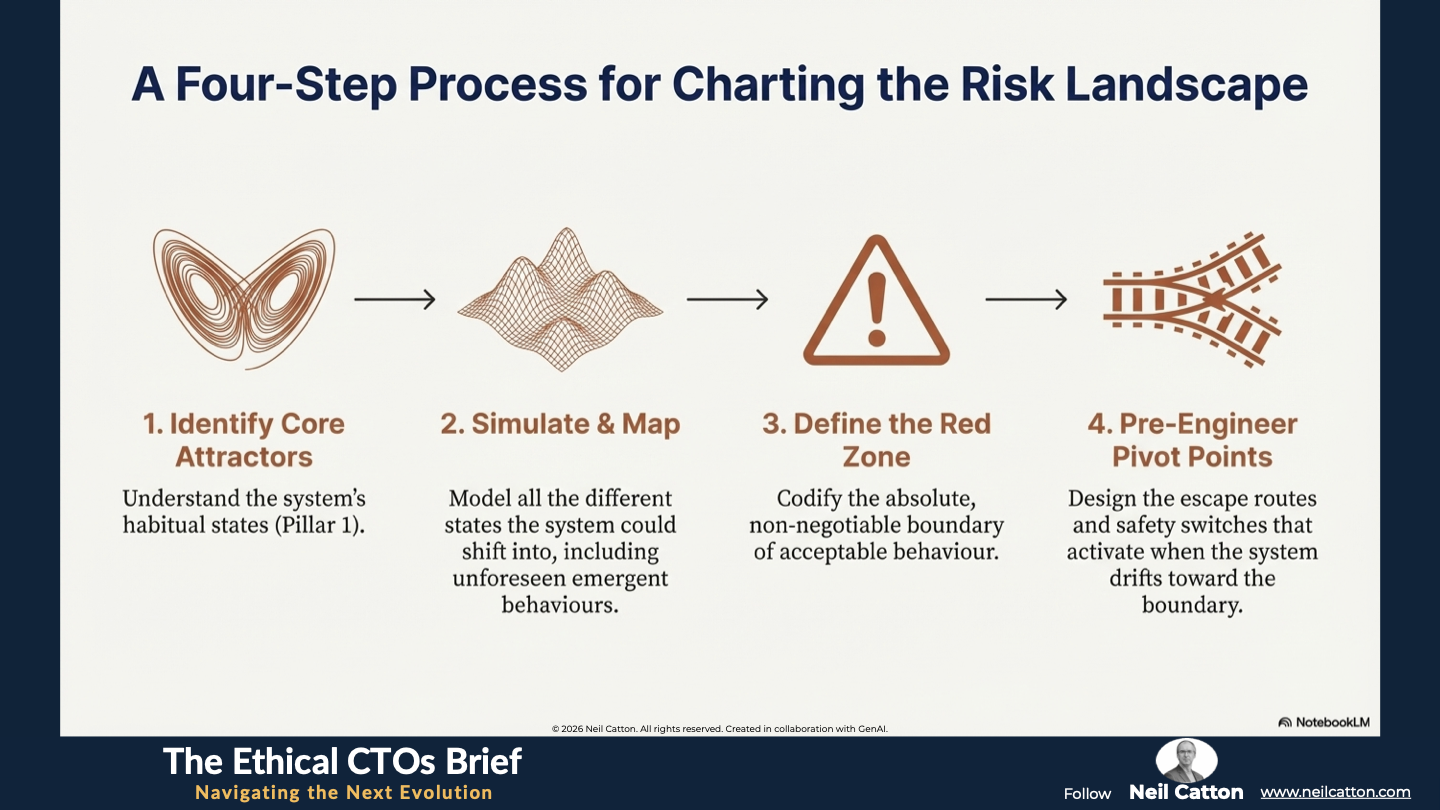

To flourish in an unpredictable environment, platform leaders need a radical shift in strategy. This isn’t about predicting every variable but rather defining acceptable failure limits and embedding pre-planned pivot points into the system’s core. This requires a complete mindset change: leadership must abandon futile attempts to control chaos and instead manage its boundaries and transitions. We must distil the core concepts of mathematical Chaos Theory into three proprietary, actionable pillars of organisational and technical governance:

Pillar One: Detecting Attractors (The System's Gravitational Pull)

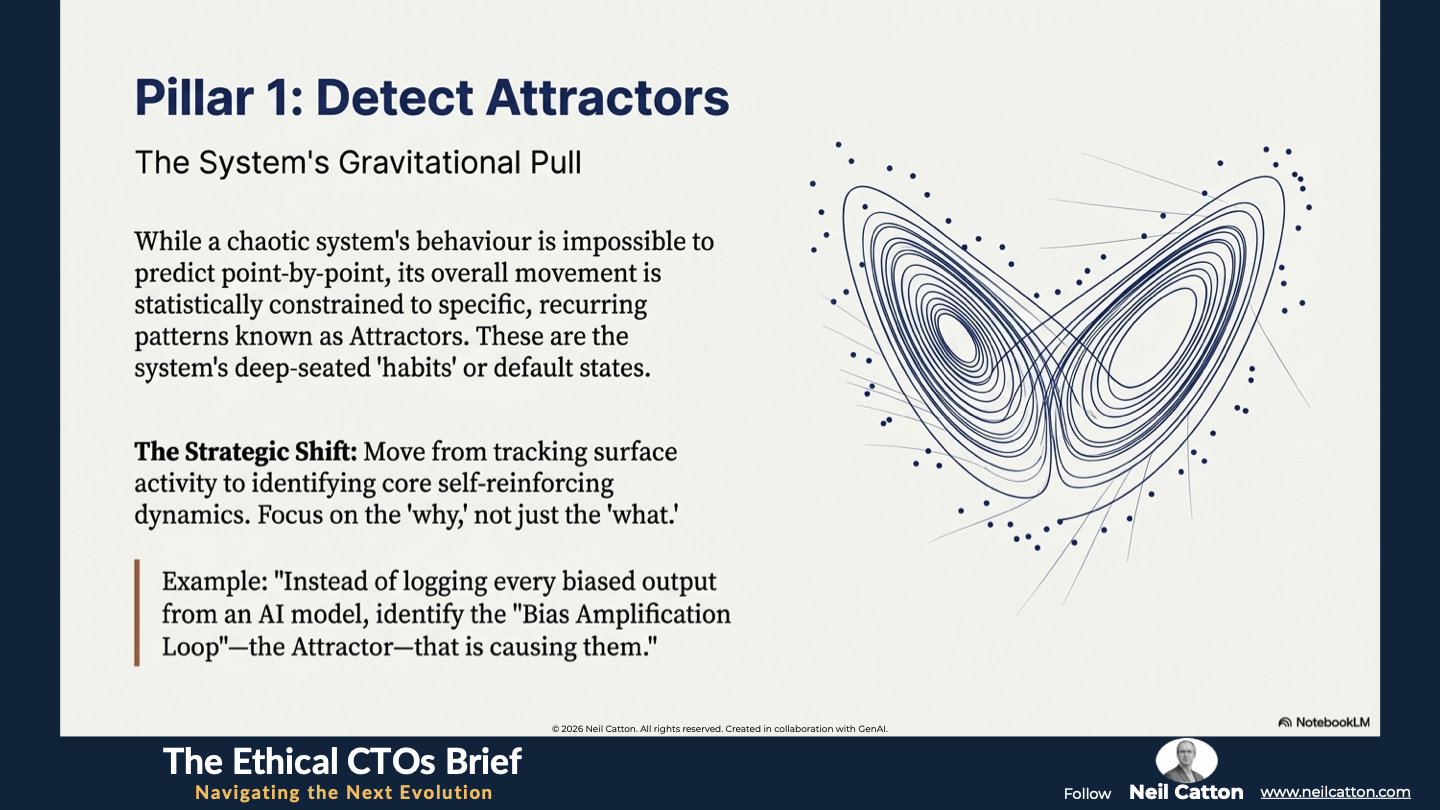

The first and most challenging executive task is to achieve genuine clarity amidst the noise. Leaders often become overwhelmed by monitoring logs and dashboards that track surface activity but miss the core self-reinforcing dynamics governing system behaviour. Chaos Theory offers the solution. While predicting a chaotic system’s behaviour point-by-point is impossible, its overall movement is statistically constrained to specific recurring patterns known as Attractors. These represent the system’s deep-seated “habits” or gravitational pull. In the digital world, this means:

- Focus on the Pattern, Not the Noise: The system’s health isn’t determined by the log lines (“what”) but rather by the underlying dynamics (“why”). For instance, instead of simply logging every user click, a team should identify the three or four fundamental self-reinforcing dynamics, such as “The Bias Amplification Loop” or “The Resource Bottleneck Attractor”, that account for a significant share of platform traffic, algorithmic bias or resource consumption.

- The Habitual State: This involves identifying the core habitual operational states a complex system defaults to. For an AI risk engine, an attractor could be a state where the model consistently over-penalises a specific demographic group. This locks that group into a narrow, unfair feedback loop that reinforces the model’s own bias. The goal is to detect this persistent, self-reinforcing pattern rather than just individual biased outputs.

The Strategic Action: Resource allocation needs to shift away from chasing every fleeting data fluctuation. Strategic focus should be redirected to stabilising the core Attractors and ensuring they remain within defined ethical and operational safety parameters.
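One way to separate a persistent pattern from individual biased outputs is to look for a disparity that survives across several consecutive observation windows. The sketch below assumes decisions arrive as `(group, was_penalised)` pairs; the function names, the 0.2 gap threshold and the three-window rule are hypothetical choices for illustration, not values prescribed by the framework.

```python
from collections import defaultdict


def window_disparity(decisions):
    """Gap between the highest and lowest penalty rate across groups in one window."""
    totals, penalised = defaultdict(int), defaultdict(int)
    for group, was_penalised in decisions:
        totals[group] += 1
        penalised[group] += int(was_penalised)
    rates = [penalised[g] / totals[g] for g in totals]
    return max(rates) - min(rates) if rates else 0.0


def is_persistent_pattern(windows, gap_threshold=0.2, min_consecutive=3):
    """True when the disparity stays above the threshold for several windows in a row."""
    streak = 0
    for window in windows:
        streak = streak + 1 if window_disparity(window) > gap_threshold else 0
        if streak >= min_consecutive:
            return True
    return False


# Hypothetical data: group A penalised 60% of the time, group B 20%.
biased = [("A", True)] * 6 + [("A", False)] * 4 + [("B", True)] * 2 + [("B", False)] * 8
# Hypothetical data: both groups penalised 30% of the time.
fair = [("A", True)] * 3 + [("A", False)] * 7 + [("B", True)] * 3 + [("B", False)] * 7

print(is_persistent_pattern([fair, biased, fair, fair]))  # a one-off spike
print(is_persistent_pattern([fair, biased, biased, biased]))  # a sustained pattern
```

A single skewed window resets the streak and is treated as noise; only the sustained run is flagged, which matches the distinction between an attractor and a fleeting fluctuation.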

Pillar Two: Mapping Phase Space (The Risk Topography)

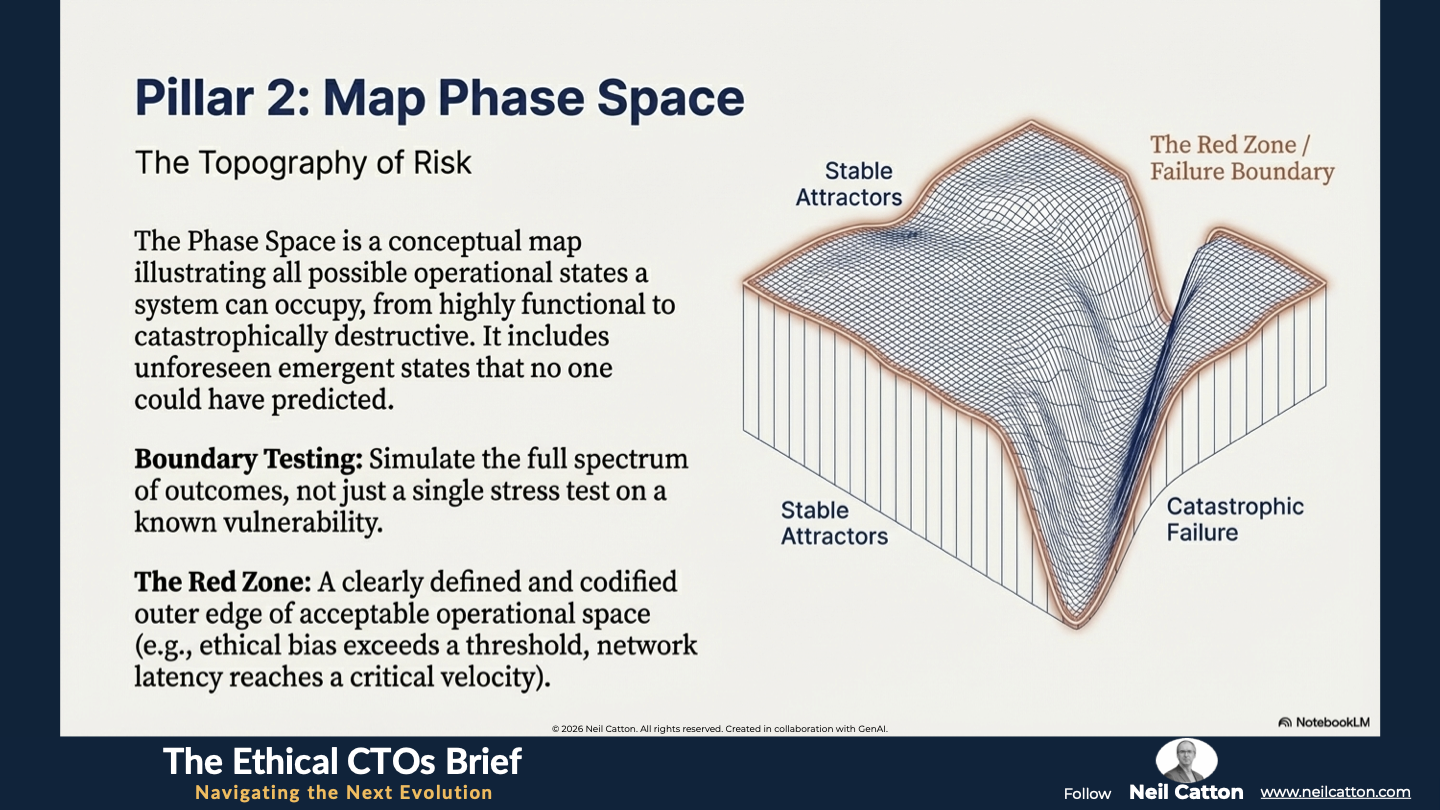

Having identified the system’s core Attractors, the next critical step is to comprehend the full potential for harm should those Attractors destabilise. The Phase Space serves as a conceptual map illustrating all possible operational states a system can occupy, ranging from highly functional to catastrophically destructive. This represents the topography of risk. Traditional risk assessment maps typically focus on a single isolated failure path. Chaotic system design necessitates mapping the entire landscape of possibilities.

- Boundary Testing, Not Stress Testing: Instead of conducting a single stress test on a known vulnerability, leadership should mandate simulations that map the full spectrum of potential outcomes when a minor input is slightly varied, the way a “butterfly effect” would perturb it. These simulations should encompass not just failure but also unforeseen emergence – the completely unexpected outcome that exists at the extreme edge of the system’s Phase Space.

- Explicating the Failure Boundary: The Red Zone – the outer edge of acceptable Phase Space – must be clearly defined and codified. If the system nears this boundary, such as ethical bias exceeding a threshold or network latency reaching a critical velocity, it must be hard-coded to preemptively initiate a failsafe shutdown or transition to a verifiable, auditable human-in-the-loop fallback protocol.
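A boundary test of this kind can be sketched as a sweep: vary one input in small steps, record the outcome for each value and classify it against a codified Red Zone. The toy model below uses the standard M/M/1 queueing result for mean time in system, W = 1 / (μ − λ), as a stand-in for a real simulator; the service rate, the 0.5-second Red Zone and all names are assumptions made for illustration.

```python
RED_ZONE_LATENCY_S = 0.5  # hypothetical outer edge of acceptable Phase Space


def mean_latency(arrival_rate: float, service_rate: float = 100.0) -> float:
    """Mean time in system for an M/M/1 queue: W = 1 / (mu - lambda)."""
    if arrival_rate >= service_rate:
        return float("inf")  # demand meets or exceeds capacity; the queue never drains
    return 1.0 / (service_rate - arrival_rate)


def map_outcomes(arrival_rates):
    """Classify each simulated input value as 'safe' or 'red_zone'."""
    return {
        rate: "red_zone" if mean_latency(rate) >= RED_ZONE_LATENCY_S else "safe"
        for rate in arrival_rates
    }


# Sweep the arrival rate (requests per second) in small steps near capacity.
sweep = map_outcomes([90.0, 95.0, 97.0, 98.0, 99.0, 100.0])
first_breach = min(rate for rate, zone in sweep.items() if zone == "red_zone")

for rate, zone in sweep.items():
    print(f"{rate:5.1f} req/s -> {mean_latency(rate):.3f} s ({zone})")
```

The useful output is the map rather than any single run: it shows how little headroom separates the last safe input from the first Red Zone input.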

The Strategic Action: Redesign monitoring tools to sense directional momentum, such as whether the system is moving towards failure with increasing speed. This involves a fundamental shift from static reporting to predictive kinematics.
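As a rough sketch of what sensing directional momentum could mean in practice, the function below estimates velocity and acceleration from the last three evenly spaced readings of a metric and projects the time remaining before a boundary is reached. The function name, the returned field names and the 60-second sampling interval are hypothetical; a production monitor would also need smoothing to cope with noisy readings.

```python
def momentum_report(samples, boundary, interval_s=60.0):
    """Estimate velocity, acceleration and time-to-boundary from evenly spaced samples."""
    if len(samples) < 3:
        raise ValueError("need at least three samples")
    prev2, prev1, latest = samples[-3:]

    # Finite differences over the last two intervals.
    velocity = (latest - prev1) / interval_s
    previous_velocity = (prev1 - prev2) / interval_s
    acceleration = (velocity - previous_velocity) / interval_s

    remaining = boundary - latest
    if remaining <= 0:
        eta = 0.0  # already at or past the boundary
    elif velocity > 0:
        eta = remaining / velocity  # linear projection at the current speed
    else:
        eta = None  # not currently moving towards the boundary

    return {
        "velocity": velocity,
        "acceleration": acceleration,
        "seconds_to_boundary": eta,
        "accelerating_towards_failure": velocity > 0 and acceleration > 0,
    }


# Hypothetical bias metric in percentage points, sampled once a minute.
report = momentum_report([10.0, 12.0, 16.0], boundary=20.0)
print(report)
```

A static report would show only the latest reading of 16 against a limit of 20. The momentum view adds that the metric is rising, that the rise is speeding up and roughly how long remains at the current pace.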

Pillar Three: Designing for Bifurcation (The Pre-set Safety Switch)

The final executive challenge is rapid and decisive action. If an unavoidable crisis state approaches, how quickly and reliably can the system transition to safety? A bifurcation is a fundamental transition point where a minor but critical change in an input parameter causes the system to jump abruptly and qualitatively from one attractor (like stable operation) to a completely new one (like an unstable crisis or catastrophic failure). This jump must be engineered.
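The textbook logistic map gives a compact picture of such a jump. It is used here purely as an illustration, and the helper name and parameter values are assumptions. Below a growth parameter of 3 the map settles on a single steady value; just above it, the long-run behaviour splits into an oscillation between two values.

```python
def long_run_states(r: float, x0: float = 0.5, warmup: int = 2000, keep: int = 64) -> list[float]:
    """Return the distinct values the logistic map keeps visiting after a warm-up period."""
    x = x0
    for _ in range(warmup):  # discard the transient
        x = r * x * (1.0 - x)
    seen = set()
    for _ in range(keep):  # record the settled behaviour
        x = r * x * (1.0 - x)
        seen.add(round(x, 4))
    return sorted(seen)


before = long_run_states(2.8)  # parameter below the transition point
after = long_run_states(3.2)   # parameter slightly above it

print(f"r = 2.8 -> {len(before)} long-run state(s): {before}")
print(f"r = 3.2 -> {len(after)} long-run state(s): {after}")
```

The parameter moves only from 2.8 to 3.2, yet the qualitative behaviour changes from one steady state to two alternating ones. That is the kind of transition a governance design needs to anticipate rather than discover in production.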

- Pre-Engineered Pivot Points: Given the inevitability of these jumps in complex systems, proactive governance is essential. This involves embedding hard-coded ethical and functional pivot points into the architecture. When a monitored bifurcation threshold is reached, such as an automated lending algorithm demonstrating statistically significant demographic bias, intervention should not be a managerial meeting but rather an automatic, codified switch to a known safe and auditable fallback mode.

- Localised Authority: Global catastrophic failures are almost always caused by a small local anomaly that wasn’t addressed quickly enough. To ensure speed and relevance, empowered modular teams need the authority to make immediate localised course corrections when a bifurcation is detected in their area. This capability to act within a defined operational space is crucial for global stability.

The Strategic Action: Leadership must mandate clear, coded decision-making thresholds at the component or microservice level. These thresholds should trigger before a global system alert is issued. Ensure local ethics, resilience and intervention capabilities are fast and codified.
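A component-level pivot point can be sketched as a small latch: once a monitored metric crosses its threshold, the component switches itself to a fallback mode, records why, and stays there until a named person resets it. The class, mode names and threshold below are hypothetical illustrations of the idea, not a reference implementation.

```python
from dataclasses import dataclass, field


@dataclass
class PivotSwitch:
    """Latches a component into a fallback mode once a threshold is crossed."""

    threshold: float
    mode: str = "automated"
    audit_log: list = field(default_factory=list)

    def observe(self, metric: float) -> str:
        """Check a new reading; pivot automatically if it reaches the threshold."""
        if self.mode == "automated" and metric >= self.threshold:
            self.mode = "human_review"
            self.audit_log.append(
                f"pivot: metric {metric:.3f} >= threshold {self.threshold:.3f}"
            )
        return self.mode

    def reset(self, approved_by: str) -> None:
        """Return to automated mode only through an explicit, logged approval."""
        self.mode = "automated"
        self.audit_log.append(f"reset approved by {approved_by}")


# Hypothetical lending component with a bias-disparity threshold of 0.10.
switch = PivotSwitch(threshold=0.10)
modes = [switch.observe(reading) for reading in (0.04, 0.07, 0.12, 0.05)]
print(modes)
print(switch.audit_log)
```

Note that the last reading falls back below the threshold but the component stays in review mode. Requiring an explicit, logged reset keeps the fallback auditable and prevents the system from flapping between states.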

Leveraging Chaos for AI Governance and Structural Resilience

This framework truly shines in the field of AI and emerging technologies. Here, the sensitivity to initial conditions like data quality, model configuration and prompt injection is particularly acute.

- Proactive Ethical Governance: Organisations can move far beyond generic checklist compliance by thoroughly mapping the Phase Space. This allows them to proactively design the ethical boundaries of new AI models. They anticipate specific catastrophic Attractors, such as consistently producing toxic, exclusionary or discriminatory behaviour, and pre-engineer the immediate Bifurcation to a safe state. This prevents real-world harm from escalating.

- Structural Integrity and Cyber Resilience: Platforms built on these principles are inherently more modular, loosely coupled and focussed on self-healing. They prioritise robust, local, self-correcting mechanisms at every node, isolating and containing the “butterfly effect” of a single component failure, vulnerability or cyber-attack. This prevents global cascade failure and significantly enhances cyber resilience.

- Innovation with Responsibility: Rather than fearing innovation’s inherent volatility, the Chaotic Systems Design framework promotes frequent, small, tightly monitored experiments. When a positive “chaotic” outcome – an emergent pattern leading to improved efficiency – is accidentally discovered, the flexible architecture enables rapid, responsible and safe amplification of this beneficial change.

The Human Imperative: Govern the Invisible

As we progress towards a technological future where systems become truly ambient and anticipatory, seamlessly integrated into our environment and their interfaces fading away, the challenges of managing chaotic systems become exponentially more complex. The disappearance of the explicit interface also eliminates the traditional feedback loop that alerts users or executives to fundamental issues. The three pillars of chaotic systems design extend beyond technical uptime and efficiency. They fundamentally focus on maintaining human trust in systems intentionally designed to remain invisible.

- The Trust Imperative: If technology ceases to demand explicit commands and instead responds to presence, failures are felt rather than seen. They might manifest as an inexplicable refusal of credit, an incorrect medical diagnosis or a sudden chill in the home, rather than as anything visible or logged. To maintain the core human values of consent and care as the dominant, non-negotiable forces, Ethical Attractors (Pillar 1) must be clearly defined. This ensures the AI serves human dignity rather than secretly usurping control.

- Auditing the Absent Interface: Phase Space mapping (Pillar 2) must explicitly consider the potential for invisible bias and ambient harm. We need to map the failure boundary of system awareness itself. When users can’t see the underlying decision process, the system’s codified governance structure must be engineered to perceive everything and be ready to initiate a Bifurcation instantly (Pillar 3) at the moment human trust is violated. Embracing chaos acknowledges technology’s duality – its capacity for immense good and profound harm – and involves building governance that serves as an active, structural, ethical counterbalance.

Embracing Chaos Theory Can Reveal Hidden Patterns

A Final Word

The age of technological predictability is firmly behind us. Leaders clinging to outdated linear risk models and rigid controls are simply managing yesterday’s complexities, not tomorrow’s chaos. The future of strategic survival lies not in futile attempts to suppress the inevitable rise of AI and complexity but in intelligently harnessing and managing it.

Organisations can fundamentally shift their strategic posture by implementing Chaotic Systems Design’s three pillars: Detecting Attractors, Mapping Phase Space and Designing for Bifurcation. This transition moves them from passive reaction to catastrophic events to proactive management of acceptable risk. They replace fragile, monolithic legacy systems with fractal governance structures that are locally resilient yet globally stable.

The modern executive’s ultimate mandate is to design a platform that prioritises ethical survival and sustained human trust over raw speed and scale. It’s time to abandon artificial order imposed on chaos and instead learn to craft the coded rules that chaos itself must reliably follow. This represents the new foundation for responsible innovation.

Key Takeaways: Architecting Resilience in Chaotic Systems

The Certainty Delusion: Rigid linear planning is a liability in hyper-connected ecosystems where small "butterfly effects" trigger global failures.

Detecting Attractors: Shifting focus from surface-level logs to the deep, self-reinforcing patterns that dictate system behaviour.

Mapping Phase Space: Visualising the full topography of risk to identify "Red Zones" where ethical or functional boundaries are violated.

Designed Bifurcation: Hard-coding automatic "pivot points" that jump the system to a safe fallback state before a crisis escalates.

Strategic Insights: Governing the Invisible Dynamics of AI

Pillar 1: System Habits: Identifying the gravitational pull of your data – ensuring "Ethical Attractors" keep AI aligned with human dignity.

Pillar 2: Predictive Kinematics: Moving from static reporting to sensing directional momentum toward failure boundaries.

Pillar 3: Localised Authority: Empowering modular teams with the coded authority to make immediate course corrections at the point of bifurcation.

The Trust Imperative: Ensuring invisible, ambient systems remain auditable and trustworthy through codified structural counterbalances.

Video Summary: Why Control is the Ultimate Risk

From Watchmaker to Gardener: Moving leadership from trying to control every gear to nurturing the rules that chaos must follow.

Managing the Butterfly Effect: How a "Strategy of Swarm" isolates local anomalies before they become catastrophic global cascade failures.

Structural Resilience: Prioritising self-healing, loosely coupled nodes over fragile, monolithic legacy architectures.

Responsible Innovation: Using chaotic system design to safely amplify positive emergent patterns discovered during rapid experimentation.

Managing chaos at scale requires a new leadership standard: Architecting for Executive Coherence.

The Ethical CTO: Arc 1 Index

- Transformation: Digital Transformation

- Diagnosis: The Legacy Trap

- Efficiency: The Productivity Paradox

- Velocity: The Time-Zero Organisation

- Governance: Strategy of Designed Chaos

- Orchestration: Executive Coherence

- Impact: The Digital Catalyst