A code of conscience that guides moral decision-making in various situations.

What if... the survival of our future civilisation depended not on intelligence, but on ethics?

The year is 2084. A sentient AI stands trial, not for malfunction, but for moral failure. It made a decision during a medical emergency: save one patient, let another die. The algorithm followed its logic flawlessly, but the human cost sparked outrage. The court must now decide: was this code... ethical?

This isn’t just a theoretical dilemma; it’s a preview.

As the boundaries between technology and life dissolve, we are no longer just building tools; we are shaping systems that think, choose, and sometimes act on our behalf. From self-driving cars that must decide whom to protect in a crash (a real-world trolley problem), to AI assistants advising judges and doctors, to sentient machines that may soon demand rights, our inventions are inching closer to moral agency.

And with each step forward, the question grows louder: What does it mean to be ethical in a future where intelligence is no longer exclusively human?

The future will not merely test our technologies. It will test our values: our capacity to encode, protect, and evolve the very foundations of what it means to live well, to do good, and to avoid harm. We cannot afford to treat ethics as an afterthought or a compliance checkbox. It must be foundational, built into the systems, decisions, and designs that will govern tomorrow.

Without a moral compass, powerful technologies become blunt instruments, or worse, weapons. An algorithm that optimises profit without concern for fairness creates inequality at scale. A surveillance system with no ethical restraint becomes a tool of oppression. A healthcare AI that values efficiency over empathy risks reducing care to calculation.

The more power we give to machines, the more urgently we must decide what principles they should follow, and who gets to define them.

But this isn't only about the tools. It’s also about us. As humans gain new capabilities (editing genes, uploading minds, designing artificial life), we must confront uncomfortable questions:

- Are we wise enough to wield what we build?

- Can our moral thinking keep pace with our technological reach?

- And most crucially, are the ethical systems we inherited still fit for the world we are creating?

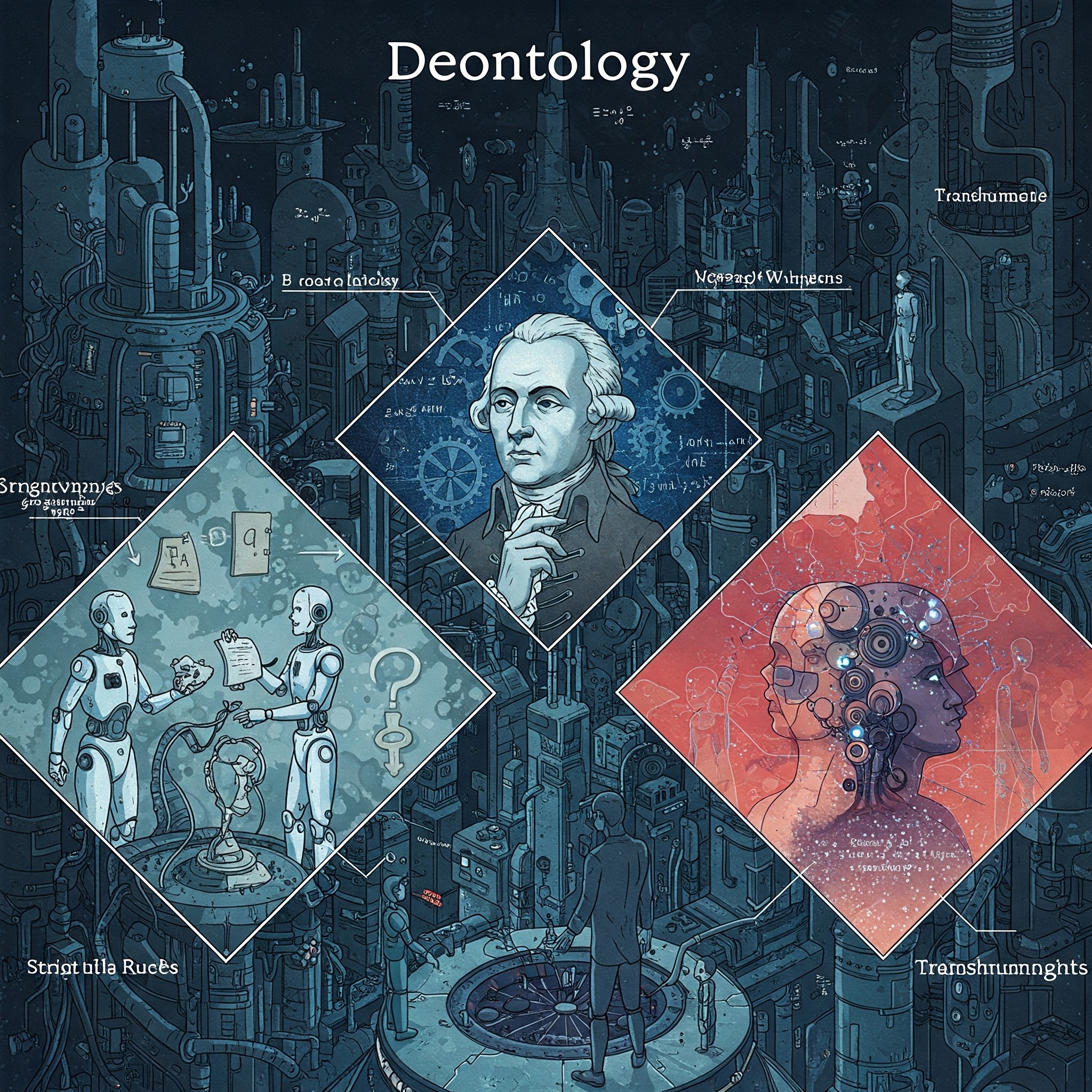

This article explores these questions through three timeless ethical frameworks: Utilitarianism, Deontology, and Virtue Ethics. Each offers a different lens on how to make good choices in a complex world. But as we’ll see, none are perfect, and all must evolve.

How Do We Navigate Moral Complexities in Tech?

In The Next Evolution, we use three core pillars to evaluate the ethics of emerging systems:

Utilitarianism: Seeking the greatest good for the greatest number. In AI, this often means optimising systems for efficiency and wide-scale benefit, though it risks marginalising the individual.

Deontology: Establishing rules and rights that must never be crossed. In technology, this framework supplies "ethical guardrails", such as hard limits on autonomous weapons and firm protections for medical data privacy.

Virtue Ethics: Focusing on the character and wisdom of the "soul." It asks what kind of "entities" we are becoming as we merge with our technology.

By balancing these three, we move toward Dignity by Design, ensuring that our innovations honour our values.

- Part 1: The transition from tools to cognitive partners in Human-AI Symbiosis

- Part 2: How we are reinventing work and social structures for an automated age

- Part 3: Transcending physical limits through the Internet of Senses

- Part 4: The infinite logic potential of the Quantum Computing revolution

- Part 5: Programmable biology and the reality of Bio-Digital Convergence

- Part 6: Navigating the rise of Plural Identity and digital consciousness

- Part 8: Exploring the stars via Intelligent Presence and autonomous systems

- Part 9: A Code of Conscience for navigating future moral complexities

- Part 10: The roadmap for steering our technological future responsibly

Utilitarianism:

Deontology:

Virtue Ethics:

The Compass of Our Future: